The fear, or the unhappy feeling, of never truly being heard is very widespread among researchers working on major societal issues. More rarely do researchers express the fear that their work might in fact be counterproductive and contribute to exacerbating the very problems they study. I often feel this fear about Life Cycle Assessment (LCA), and more generally about the modeling of relationships between Humanity and the Environment.

This fear troubles me because, when I was younger, I was haunted by Sartre's question of what it means to write in a world that is hungry. It resonated deeply within me and led me to choose a discipline directly engaged with the world and its suffering. Paradoxically, the anxiety may be even stronger for a modeler than for a writer, because literature at least serves beauty. If modeling and quantifying the world did not even change it, it would be a perfectly odious occupation. I think here of Marx's last thesis on Feuerbach, which criticizes the idealism and incomplete materialism that dominated philosophy in the first half of the nineteenth century: "The philosophers have only interpreted the world, in various ways; the point, however, is to change it." (Marx, 1845).

The oppositionality of science

The powerful Church fought the rise of the natural sciences, burning Giordano Bruno, imprisoning Galileo, and opposing Darwin, because these sciences challenged its mythology and therefore its power. The rise of the bourgeoisie, by contrast, glorified the natural sciences because they enabled the development of productive forces, increases in productivity and the associated profits, and therefore the power of the bourgeoisie.

Sociology, if it is not placed in the service of advertising, the manufacture of consent, and the maintenance of order, is the enemy of the bourgeois order because it sheds light on the falsity of capitalist and neoliberal myths. Human and natural sciences share a common quest for knowledge that illuminates the functioning of the world. Yet most of the knowledge produced by the natural sciences does not directly oppose the bourgeois order, which can instead use it to its advantage.

By contrast, the social sciences that study social organization undermine the fables that sustain the bourgeois order: meritocracy, the “value of work” (the work of others), the supposedly natural necessity of social inequalities, racism, and so on.

In seeking truth, these sciences enter into opposition with the false world described by Adorno and dear to Lagasnerie. They are oppositional simply by striving to reveal truths buried beneath the alienation and domination that shape the relationship of human beings to the world within their social organizations. To engage in a pursuit of truth is already to take a position of opposition. The pleonasm of the “committed intellectual” or the “engaged scientist” then becomes apparent.

Today, the most oppositional of the natural sciences is perhaps environmental science. Astronomy no longer has the same disruptive potential as it did in the time of Giordano Bruno; current systems of domination no longer rest on biblical geocentrism. The collapse of living systems and of the conditions that make the Earth habitable, however, is a far more troubling truth for the capitalist order.

Climate science and environmental science more broadly are among the primary targets of the fascist MAGA movement, the culmination of an exacerbated form of American capitalism whose temporary ambassador, George H. W. Bush, was already proclaiming at the 1992 Rio Earth Summit that the “American way of life is not negotiable.”

The social sciences are also under violent attack, including, albeit to a different degree, in France, where the hunt for “Islamo-leftism” (a vague conspiratorial concept, the “Judeo-Bolshevism” of our time) within universities has become a recurring theme. This hunt reached a peak with the 2021 announcement by the French Minister of Higher Education and Research, Frédérique Vidal, calling for an investigation into the supposed presence of this movement within French research.

The suspicion directed at academics, who are accused of knowingly producing discourses in the service of the most dominated social groups (cast as “parasites”), of dividing national unity and of being enemies from within, is a fundamental component of the reactionary dynamic of late capitalism, which readily embraces anti-intellectualism.

Given this context, how is it that a scientific discipline such as industrial ecology, and more specifically Life Cycle Assessment (LCA), is so legitimized and so aligned with the spirit of the times?

Born in the 1980s and solidly theorized in the 1990s, LCA rapidly conquered academic, industrial, and institutional spheres, as evidenced by the multiplication of LCA consulting firms, dedicated departments within large companies, and legislative efforts such as the Product Environmental Footprint initiative at the European Union level. Given the context described above, one might expect that a discipline combining environmental science, management and economic sciences, and the social sciences would face strong opposition. On paper, LCA and its developments constitute a science of the environmental impacts of our productive system and could therefore pose problems for the owners of the most polluting industries. Likewise, the need to mobilize environmental ethics, which is essential for determining the areas of protection considered and the weightings between environmental impacts, as well as accounting, economics, and even other social sciences in its most advanced developments, could make LCA modeling a particularly oppositional field. Such oppositionality, once again, would not require any additional commitment: the simple act of revealing the falsity of the world already makes scientific practice oppositional in fact.

Yet the enthusiasm surrounding LCA seems to indicate broad acceptance of the practice. This does not mean that LCA cannot be oppositional, nor that it never is, as we will see. Nevertheless, in its most common practice it threatens nothing that is established and is largely harmless to the structures that exacerbate the destruction of the world and of sentient beings. Let us therefore try to understand why—and how it might become otherwise.

A responsibility narrative

In the overwhelming majority of cases, LCA attributes impacts to products or services, or to the demand for them, and the impact is ultimately borne by the final consumer. The associated worldview is therefore compatible with the neoliberal discourse of a market in which companies merely respond to the demands of perfectly free individuals whose consumption may be more or less virtuous. “If nobody wanted it, it wouldn’t sell,” and if we determine what should not be bought, we can tell people. If they still buy it, they are morally at fault. The company selling the product is not at fault at all; it is even virtuous, since it responds to consumer demand. The notion of the personal environmental footprint assigns each individual “their impact,” and this impact comes solely from their consumption. A shareholder who pressures a company to launch marketing campaigns in order to boost product sales will not see their environmental footprint increase. But why not? If we are willing to say that a consumer is responsible for the impacts associated with buying a product, it is because we assume that without their demand the product and its impacts would not exist. This counterfactual vision notably dominates the consequential LCA approach, where an actor is responsible in proportion to the consequences of their decision (Weidema et al., 2018). Consequences are defined by invoking a counterfactual situation in which the studied decision did not occur. If shareholder pressure leads to 1,000 additional consumers buying the product, the counterfactual world without that pressure would indeed be a world with lower impacts. The impacts normally attributed to each of these new consumers should therefore belong to the shareholder’s footprint, since without him they would not have become consumers.
But perhaps that shareholder exerted pressure to increase dividends because he felt unloved by his father, who always made it clear that he preferred the brother who was more successful in business. In that case, the father becomes responsible for the impacts of those 1,000 consumers, because without his behavior his son would not have acted in that way.
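The but-for logic of the shareholder and father example can be sketched in a few lines. Everything here is hypothetical. The impact per unit (2 kg CO2-eq) is invented, and the three boolean conditions are a deliberately crude stand-in for a causal chain. They are not an implementation of consequential LCA as described by Weidema et al. (2018). Each condition is necessary for the extra sales, so removing any one of them erases the whole impact, and the counterfactual test hands the full amount to every actor.

```python
# Hypothetical numbers, for illustration only
IMPACT_PER_UNIT = 2.0    # kg CO2-eq per product sold (invented)
EXTRA_CONSUMERS = 1000   # consumers gained through shareholder pressure (from the text)

ACTUAL_WORLD = dict(father_favoritism=True, shareholder_pressure=True, consumers_buy=True)


def total_impact(father_favoritism: bool, shareholder_pressure: bool, consumers_buy: bool) -> float:
    """Impact of the extra sales in a toy world where every condition is necessary."""
    happens = father_favoritism and shareholder_pressure and consumers_buy
    return EXTRA_CONSUMERS * IMPACT_PER_UNIT if happens else 0.0


def but_for_share(actor: str) -> float:
    """Counterfactual impact of one actor: actual world minus the world without that actor."""
    counterfactual = dict(ACTUAL_WORLD, **{actor: False})
    return total_impact(**ACTUAL_WORLD) - total_impact(**counterfactual)


shares = {actor: but_for_share(actor) for actor in ACTUAL_WORLD}
physical = total_impact(**ACTUAL_WORLD)

for actor, share in shares.items():
    print(f"{actor:>22}: {share:.0f} kg CO2-eq")
print(f"{'sum of shares':>22}: {sum(shares.values()):.0f} vs physical impact {physical:.0f}")
```

Adding another link to the chain, such as a grandfather or an advertising agency, adds another full share. The sum of counterfactual "responsibilities" therefore grows with the length of the chain while the physical impact stays fixed.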

As we see, causal chains are infinite, and determining consequences requires constructing counterfactual scenarios to which we do not actually have access. What would the son have done if his father had shown him more affection? Who can say he would not have developed a superiority complex instead of an inferiority complex, pushing him to extract even more dividends from the company? The notion of responsibility is constitutive of the fictions upon which our social order rests. It may be a necessary fiction for some, but it is not a truth of the physical world. As Weidema et al. (2018) note, there is no objective way to define where responsibility ends. This is why ISO 26000, which defines responsibility (in the sense used for Corporate Social Responsibility) through the notion of a sphere of influence, remains debated within international institutions responsible for implementing ISO standards. A UN-mandated report intended to clarify the concept concluded in 2008 that the notion of sphere of influence is “too broad and ambiguous a concept to define the scope of due diligence with any rigour,” because it conflates two different meanings of influence: impact (causing harm) and leverage (the capacity to influence actors causing harm) (Ruggie, 2008). Its author, John Ruggie, the UN Secretary-General’s Special Representative, nevertheless defended the notion as a “useful metaphor,” thereby highlighting the fictive, metaphorical, and retrospectively constructed nature of responsibility.

Here again one can turn to penal abolitionism to move beyond the concept of responsibility. The parallel is all the more relevant since consumer responsibility—as commonly framed in LCA—is almost synonymous with consumer guilt. Certain LCA practices—particularly attributional approaches used for environmental labeling and personal footprint calculators—resemble police investigations seeking to determine who is responsible for environmental damage. Sociology shows that it is illusory to manipulate notions of responsibility and guilt to understand or prevent events labeled “criminal,” which are merely emergent outcomes of complex social situations. If individuals placed in the same conditions of existence tend to produce similar actions, it is pointless to attribute responsibility to individuals, because the situation itself is the cause. Durkheim showed that suicide rates remain stable under similar conditions of existence, demonstrating that suicide is more a social than an individual reality.

Industrial ecology likewise shows that it is futile to isolate responsibility through consumption, since all economic and environmental flows are interconnected within an economy. Each product contains the entire economy. Every service relies on the present and past society that makes its production possible. In The Wealth of Nations, Adam Smith showed how a simple wool coat embodies the labor and ingenuity of an entire society. A Bic pen contains the accumulated knowledge of thousands of generations and millions of hours of labor, commodified or not. All of human life is in the pen, and attributing a share of environmental impacts to it, and to its consumer, is necessarily normative and open to debate.
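Input-output analysis, one of the workhorses of industrial ecology, gives a precise meaning to the idea that each product contains the entire economy. If A holds the technical coefficients, the total output x needed to deliver a final demand f solves x = Ax + f, so x = (I - A)^-1 f. The three-sector matrix below is invented for illustration. Any sector that supplies the pen sector directly or indirectly sees part of its output mobilized by the demand for a single pen. How that output and its emissions are then charged to the pen and its buyer is a separate, normative step.

```python
import numpy as np

sectors = ["pens", "plastics", "energy"]
# Hypothetical technical coefficients: A[i, j] = input from sector i per unit output of sector j
A = np.array([
    [0.00, 0.01, 0.00],  # pens used by the plastics sector (offices, etc.), assumed tiny
    [0.30, 0.05, 0.02],  # plastics needed per unit output of each sector
    [0.10, 0.40, 0.08],  # energy needed per unit output of each sector
])
f = np.array([1.0, 0.0, 0.0])  # final demand: one unit of pens and nothing else

# Total requirements x = (I - A)^-1 f, computed by solving the linear system
x = np.linalg.solve(np.eye(len(sectors)) - A, f)

for name, amount in zip(sectors, x):
    print(f"{name:>9}: {amount:.4f}")
```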

Where penal abolitionism proposes zemiology, a science of, and attention to, harm and healing rather than guilt, LCA could be used similarly by abandoning the search for responsibility. It would then take a purely consequential form, abandoning normative debates about responsibility allocation and focusing on the consequences of any decision, not only consumption decisions. But such an LCA would not occupy the entire space of ecological and social planning. Every decision has consequences, but every decision emerges within a socially determined system, which is the domain of politics. The fundamental responsibility that should interest us is that of structures, and therefore of the individuals who primarily maintain those structures. An LCA study showing that A has less impact than B should highlight that the institutions, power relations, domination, and habits that lead to greater consumption of B are responsible for the additional impacts. LCA would thus shift from a consumption-centered approach to one centered on decisions and structures. Following Lagasnerie’s inspiration, justice could behave like medicine. Interpersonal violence should be understood through its rate of occurrence, much as influenza is treated as a matter of epidemiology rather than as an individual issue (who cares who infected you?). In the same way, environmental impacts should be approached collectively.

LCA helps us understand causal relationships within structures, and we change the structures to modify those causal relationships. The culmination of this trajectory would be the emergence of an LCA adapted to planned and democratic economies. Democracy and emancipation have to extend to production itself, as happened in France with the creation of social security, driven by the communists and the CGT. It is too rarely emphasized that our so-called democracies allow quasi-feudal domination to persist in the sphere of production. People living in democratic regimes spend a large part of their waking lives at work under an anti-democratic regime where they have no say in what they produce, why they produce it, or how. Once society collectively decides what to produce and how to produce it, the notion of individual responsibility through consumption disappears. In a democratic and planned economy, the causal link between consumption and impact is broken, because production levels are set by deliberation and planning rather than by aggregated demand signals. Even in the chaotic capitalist economy, which oscillates between overproduction and crises, those signals do not fully determine production.

In such an economy, there is no environmental impact associated with eating, housing oneself, or traveling. There is only an impact associated with the social organization required to feed, house, and transport people.

Quantification, mystification and governance

The appeal of LCA, including my own attraction to it, comes from its capacity to quantify, to produce numbers. From the result of a single LCA, one may hastily conclude that an alternative A is 2.5% less impactful than an alternative B. Without complementary analysis, using this result to support the claim that choosing A rather than B already constitutes “a step in the right direction” is a mystification. This 2.5% is completely negligible compared with the many uncertainties at play. Without uncertainty and sensitivity analysis, we miss the fact that altering a single minor assumption, based on almost nothing, could make A appear 50% more impactful than B. And if the difference were 25%, or 250%? I would be tempted to say that, without complementary analysis, it would still not be worth much more. If one wants to produce numbers through analysis, one should at least produce them in abundance, test as many hypotheses as possible, study the entire space of possibilities, and understand how the conclusion reacts to the assumptions. The interest of a study lies primarily in the quantitative understanding of the system under study, not in the final impact result which, taken in isolation, means nothing.
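A minimal Monte Carlo sketch makes the point about the 2.5% difference concrete. All numbers are assumptions. A and B have medians of 97.5 and 100 in arbitrary impact units, and each is multiplied by an independent lognormal uncertainty factor with a log-space standard deviation of 0.3, a geometric standard deviation of about 1.35. Under these assumptions, A comes out lower than B in only slightly more than half of the draws, and the ratio A/B spans roughly a factor of two on either side.

```python
import random

random.seed(42)  # reproducible draws
N = 100_000
SIGMA = 0.3      # assumed log-space standard deviation of each impact score


def draw(median: float) -> float:
    """One Monte Carlo draw of an impact score with lognormal uncertainty around `median`."""
    return median * random.lognormvariate(0.0, SIGMA)


# Independent draws for A and B (an assumption; see the note below)
pairs = [(draw(97.5), draw(100.0)) for _ in range(N)]
p_a_better = sum(a < b for a, b in pairs) / N
ratios = sorted(a / b for a, b in pairs)

print(f"deterministic difference: {1 - 97.5 / 100:.1%}")
print(f"P(A < B) = {p_a_better:.3f}")
print(f"90% interval of A/B: [{ratios[int(0.05 * N)]:.2f}, {ratios[int(0.95 * N)]:.2f}]")
```

The independence assumption matters. In a real comparative LCA, A and B usually share background data, so their uncertainties are correlated and the shared parameters should be sampled jointly. This can narrow the spread of A/B considerably, which is exactly why the comparison has to be analyzed rather than read off two point values.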

So can a good uncertainty and sensitivity analysis protect us from this mystification? It all depends on how it is carried out. It seems clear to me that an uncertainty analysis alone, providing distributions of numbers resulting from the propagation of uncertainties defined on our input parameters, does not allow us to demystify. If a single number is mystifying, ten thousand numbers forming a distribution that represents the model’s output uncertainty may be ten thousand times more mystifying. Where the single number clearly appears insufficient and only misleads the novice, a beautiful distribution gives the appearance of well-executed work and lowers our guard. By adding a mathematical layer, one can increase the seductive power of modeling. We now have at our disposal many additional numerical indicators: mean, median, quantiles, probabilities, etc. But none of this overturns the motto of modeling: “GIGO,” Garbage-In, Garbage-Out. Only global sensitivity analysis will tell us how the garbage-out depends on the garbage-in. One could say that the work of understanding the uncertainty and sensitivity of a model consists in acknowledging and showing the extent to which the results are conditional. Every result is conditional; every probability produced by the model is conditional on all the assumptions, on the absence of unforeseen events, on the persistence of the economic system, etc.
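Variance-based global sensitivity analysis apportions the output variance to the inputs. Its basic measure is the first-order Sobol index S_i = Var(E[Y | X_i]) / Var(Y). The sketch below estimates it with a standard Monte Carlo estimator built on two independent sample matrices A and B and on hybrid matrices that take column i from B. This is the sampling scheme popularized by Saltelli and colleagues. The three-parameter impact model and its uniform ranges are hypothetical. Dedicated libraries such as SALib implement this and more robust variants.

```python
import numpy as np

rng = np.random.default_rng(0)
N = 200_000

# Hypothetical inputs and uniform ranges (invented for illustration)
BOUNDS = {
    "electricity_kwh": (8.0, 12.0),
    "grid_intensity": (0.05, 0.8),  # kg CO2-eq per kWh, deliberately very uncertain
    "transport": (0.1, 0.3),        # kg CO2-eq, small and fairly well known
}
LOWS = np.array([lo for lo, _ in BOUNDS.values()])
HIGHS = np.array([hi for _, hi in BOUNDS.values()])


def model(X: np.ndarray) -> np.ndarray:
    """Toy impact model: electricity use times grid intensity, plus transport."""
    return X[:, 0] * X[:, 1] + X[:, 2]


def sample(n: int) -> np.ndarray:
    """Independent uniform draws, one column per input."""
    return rng.uniform(LOWS, HIGHS, size=(n, len(BOUNDS)))


A, B = sample(N), sample(N)
fA, fB = model(A), model(B)
var_y = np.var(np.concatenate([fA, fB]))

first_order = {}
for i, name in enumerate(BOUNDS):
    AB = A.copy()
    AB[:, i] = B[:, i]  # matrix A with column i taken from B
    # Monte Carlo estimate of Var(E[Y | X_i]), normalized by Var(Y)
    first_order[name] = float(np.mean(fB * (model(AB) - fA)) / var_y)

for name, s1 in first_order.items():
    print(f"{name:>16}: S1 = {s1:.3f}")
```

The indices say nothing about whether the assumed ranges are right. They only map the garbage-in onto the garbage-out, and that mapping is precisely their value.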

A global sensitivity analysis pushed to the structure of the model itself, and not only its parameters, therefore constitutes the maximum that can be done while remaining within the domain of quantification. It is remarkable, however, that a number of the mathematicians and statisticians who have shaped those jewels, the computational methods of global sensitivity analysis, are also engaged more broadly in advocating epistemic and political caution regarding the use of models and numbers. Andrea Saltelli, one of the central figures of sensitivity analysis, takes part in particular in the development and defense of an ethics of quantification (Saltelli et al., 2020). This ethics is described as the effort of “iterative illumination of the obfuscation associated with legitimization through quantification” (Sareen et al., 2020). It is therefore an operation of demystification, carried out by specialists of quantification themselves, fully aware of the hypnotizing potential of numbers, whether they are produced by descriptive statistics of the “real” (which takes shape through quantification) or originate from “Model-Land” (Thompson & Smith, 2019).
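Pushing the analysis to the structure of the model can be sketched by treating a modeling choice as one more uncertain input. Here a hypothetical switch selects, with equal probability, between two allocation rules for a co-product. The 0.7 and 0.4 shares and the spread of the total burden are invented. Because the switch is discrete, its first-order index Var(E[Y | switch]) / Var(Y) can be computed directly by grouping the draws on its two values. This is only a toy version of treating model structure as an uncertain factor.

```python
import random
import statistics

random.seed(1)
N = 100_000
SHARES = {"mass": 0.7, "economic": 0.4}  # invented allocation shares for the main product


def impact(total_burden: float, rule: str) -> float:
    """Impact assigned to the main product under a hypothetical allocation rule."""
    return SHARES[rule] * total_burden


rules = [random.choice(list(SHARES)) for _ in range(N)]  # structural choice as a 50/50 factor
burdens = [random.gauss(100.0, 5.0) for _ in range(N)]   # parametric uncertainty (assumed)
y = [impact(b, r) for b, r in zip(burdens, rules)]

mean_y = statistics.fmean(y)
var_y = statistics.pvariance(y, mu=mean_y)

# Var(E[Y | rule]): weighted spread of the conditional means of the two groups
var_between = 0.0
for rule in SHARES:
    group = [yi for yi, ri in zip(y, rules) if ri == rule]
    var_between += len(group) / N * (statistics.fmean(group) - mean_y) ** 2

s_structure = var_between / var_y
print(f"first-order index of the allocation choice: {s_structure:.2f}")
```

With these invented numbers, the methodological choice explains almost all of the output variance. The parametric uncertainty, the usual object of uncertainty analysis, barely registers.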

Quantification used both to illuminate and to legitimize decision-making constitutes a particular anthropology, which the jurist Alain Supiot examines brilliantly in a series of lectures at the Collège de France later organized into a book entitled La gouvernance par les nombres (2015; English translation: Governance by Numbers). This governance represents the end of government by human beings, by law, and by justice, and replaces it with the management of humans through quantification and the evaluation of numerical targets. Supiot highlights the historical rupture represented by the use of mathematics to decide and govern, rather than to decipher the language of nature. The development of statistics pushed forward by state administrations constitutes an archetypal example. This governance by numbers appears wherever the political retreats in order to make room for management. It is essential to the legitimation of the extreme center (Serna, 2019), which hides its extreme class politics behind a technocratic discourse mobilizing numbers. The obsession with GDP growth as an indicator of the health of society and the absurd and perfectly arbitrary rule capping the public deficit of European states at 3% of GDP are two striking symptoms (Priewe, 2020). The field of possibilities, the realm of politics, is limited by the attainment of numerical targets defined by these indicators. Governance by numbers as practiced in late capitalism is the political mode of the end of history: the communist project has been eradicated, no political project threatens the extension of capital anymore, and all that remains is to keep an eye on the machine’s counters, modulating the fundamental variables of capitalism indefinitely, “responsibly.” This “responsibility,” another essential component of the discourse of the extreme center and of the right in general, is that of the accountant answerable to their employer, which is capital.

While writing this text, I pause and see on my phone that, according to a poll, 58% of French people would favor having a boss as head of government, and 68% think that governments do not understand the realities that entrepreneurs face. It should be noted in passing that polling and the creation of public opinion (which does not exist, cf. Bourdieu) are obviously perfect illustrations of the legitimizing role played by numbers. The accompanying BFMTV article is a perfect example of embarrassing pro-capitalist propaganda disguised as analysis. Still, since the content and “result” of the poll serve my argument, I decide to believe that it says something about society. For what better demonstration of the “flattening of the world” described by Supiot could one find? Politics would supposedly be nothing more than the good management of the “realities” that naturally impose themselves on private firms, the only valid and natural collectives of production. Beyond the obvious implication (“we want a boss as president so that he finishes dismantling labor law, reduces the ‘cost of labor,’ and removes every obstacle to the infinite accumulation of profit”), the most serious aspect is probably the dramatic impoverishment of the ambitions of politics in such a vision. Politics is not supposed merely to “know realities” in order to steer their evolution within a given economic and social system; it is supposed to produce those realities.

But is governance by numbers intrinsically associated with capitalism? Not at all. In Anti-Dühring, Engels (1878) describes the withering away of the state after the dictatorship of the proletariat. Lenin devotes many pages to this in The State and Revolution (1917). Once the bourgeois state is abolished, the dictatorship of the proletariat takes control of the “special power of repression” that the state constitutes (Engels). This temporary dictatorship, as opposed to the permanent dictatorship of the bourgeoisie, allows the establishment of the classless communist system, but once this system is in place, the state structure and the bureaucracy dedicated to its maintenance wither away by themselves. There is no longer a class system to preserve, and the state as a tool for preserving this system disappears. Then begins a new era that interests us here: “The intervention of state power in social relations becomes superfluous in one domain after another and then naturally falls asleep. The government of persons is replaced by the administration of things and the direction of the processes of production. The state is not ‘abolished’; it withers away.” (Engels, 1878, pp. 301-303, 3rd German edition). This administration of things is a governance by numbers, of which the Soviet Gosplan, responsible for planning the economy, is an obvious example. Yet the Soviet state, understood as this special power of repression, would never wither away and give way to a simple administration of things. In Stalin’s USSR, bureaucratization, that is, the permanence of a separate class of bureaucrats confiscating workers’ democracy for its own interests, constitutes a perversion of the seminal writings of Marx, Engels and Lenin, who all opposed such a dynamic; Lenin himself warned against it as Stalin rose to power.
This bureaucratization, together with the struggle for survival (first against the tsarists, then against Nazi Germany, and finally against the capitalist world during the Cold War), would confiscate democratic governance by numbers. Despite this perversion, Soviet governance by numbers transformed a backward feudal tsarist state into a world power where health, housing and education were provided to millions of Soviet citizens. Communist governance by numbers enabled planned economies to outperform capitalist countries on many indicators of human development, such as health, education, housing and women’s emancipation, as shown by studies examining these variables (Cereseto & Waitzkin, n.d.; Lena & London, 1993; Navarro, 1992). The socialist obsession with quantification is captured by Lenin’s enthusiasm in his 1917 speech to the Petrograd Soviet: “Socialism is accounting. If you want to record in the accounts every piece of iron and cloth, then that will be socialism” (Lenin, 1955, vol. XXVI, p. 261, cited in Mespoulet, 2012). Quantification and the communist scientific approach to society went as far as futuristic proposals, too advanced for the technology of the time, for the cybernetic computer management of all flows in society. They were accompanied by an interest in variables that went beyond the simple accounting of economic flows. Soviet statistics examined literacy, leisure, household economics, the liberation of free time, the management of time in households, and the evolution of time devoted to domestic tasks, notably with the aim of freeing women’s time so that they could join social and political activities (Mespoulet, 2012). Above all, the communist project aimed, at least on paper, to democratize statistics:

“In capitalist society, statistics were the exclusive domain of ‘state people’ or narrow specialists; we must bring them into the masses, popularize them so that workers gradually learn to understand for themselves and to see how and how much work must be done, and how and how much one can rest…” (Lenin, 1955, cited in Mespoulet, 2012).

Unfortunately, it was not this democratic vision of knowledge and politics that would prevail in the Stalinist USSR. Yet this inspiration of Lenin already constituted an intuition of the ethics of quantification, and it echoes the magnificent call by Funtowicz and Ravetz: “The demystification of the mathematics of uncertainty is therefore a central part of the programme for the democratization of scientific expertise.” (Funtowicz & Ravetz, 1990). The bureaucracy, as the dominant class of the USSR, together with permanent war, both internal and external, would prevent this demystification and turn economic indicators into ends rather than means, leading various politico-economic actors to falsify numbers in order to avoid sanctions from the nomenklatura.

I do not think that governance by numbers is intrinsically a problem, a position that is not very surprising for a modeler who moreover wishes to see a new world emerge. Counting things is the prerogative of any system of economic organization and of explanation of the world. More than explaining the world, counting makes it appear. When Auguste Comte called for a “Social Physics” in the nineteenth century and Adolphe Quetelet began quantifying society under that same name, they made society appear as such, as the object of the ancestor of sociology. When Élisée Reclus quantified interactions between humans and their environment in what he called “Social Geography,” an ancestor of ecology, he made the environment appear as such. LCA makes visible the interdependence between the production of society and the destruction of the environment and of humans, and it can inform the trade-offs involved. It can produce an oppositional vision of the world if it does not content itself with providing metrics that serve capitalism’s governance by numbers. As a formidable machine capable of producing numbers by the million, LCA can also serve the project of mystifying quantification characteristic of capitalism and of authoritarian bureaucratic communism. A 2% difference in impact can become a marketing argument, a 3% reduction can serve as proof of the “greening” of an oil company, and, more seriously, methodological choices can push the conclusions of an LCA in one direction or another. There is no problem with methodology being the subject of scientific discussion. The problem arises, for example, with the multiplication of Product Category Rules (PCRs), distinct sets of methodological principles to follow when conducting an LCA (Konradsen et al., 2024). These PCRs are used to produce Environmental Product Declarations (EPDs). EPDs have administrative value and are subject to legislation, which could apply to them even more strongly in the future.
With around twenty organizations in Europe able to issue their own PCRs for their own sectors, resulting from compromises between administration, science and industry, the variability of possible EPDs explodes (Konradsen et al., 2024). Here again one can mobilize Supiot, who underlines how the “Law and Economics” doctrine of the Chicago School (Hayek, Friedman, etc.) turned law and regulation into commodities like any other. A global supermarket of laws and regulations then emerges. These laws and regulations no longer constitute a heteronomous third party, produced by political decisions, that frames the economic activities of private agents. They are brought onto the same plane as all other economic objects and resources. Capital can therefore relocate to access more flexible regulations, just as it moves toward more exploitable labor. Tax havens are an example of countries that have dedicated their economies to “producing” attractive regulation. LCA and its PCRs may become a new aisle in this supermarket. To caricature, but not by much, one might choose to classify a glass façade as a window rather than a door in order to fall under more advantageous PCRs and artificially “reduce” the environmental impact of one’s product.
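The window-or-door caricature can be written down in a few lines. Everything below is hypothetical. The two rule sets differ only by an invented reference service life, the assumed convention of expressing results per year of service is for illustration only, and the inventory is a single made-up figure. The point is only that the same physical inventory yields different declared values depending on which category the product is filed under.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PCR:
    """Hypothetical product category rule, reduced to the one parameter that matters here."""
    category: str
    reference_service_life_years: float


# Invented life-cycle impact of one glass facade element (kg CO2-eq)
LIFE_CYCLE_IMPACT = 450.0


def declared_per_year(pcr: PCR) -> float:
    """Impact per year of service under a hypothetical rule that annualizes results."""
    return LIFE_CYCLE_IMPACT / pcr.reference_service_life_years


as_window = PCR("window", reference_service_life_years=40)
as_door = PCR("door", reference_service_life_years=25)

for pcr in (as_window, as_door):
    print(f"declared as a {pcr.category}: {declared_per_year(pcr):.1f} kg CO2-eq per year")
```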

Prospective LCA and the closing of the future

One of the greatest potentials for mystification in LCA lies in its prospective branch, which is the one I work on the most. As Bohr (or some other Dane) said, “Det er svært at spå, især om fremtiden,” meaning “It is difficult to predict, especially about the future.” A version that works better here (Bohr was very cool but did not do LCA and merely laid the foundations of quantum physics) would be to say that the whole challenge of modeling, quantification, and their use, whether scientific, political, or philosophical, is amplified in prospective applications. Modeling in non-prospective LCA already “predicts” something about the current world, but modeling prospectively “predicts” doubly. As is customary, I write in my papers that “Prospective LCA does not predict the future, but proposes visions of possible futures according to blah blah.” But I do not really believe it. I am not claiming to predict the future, but I do believe that this is, in effect, what we do. Science is a performative activity, whose statements about the world it studies act upon that world. Bourdieu says this clearly (for once) and at length (as always) in Language and Symbolic Power (Bourdieu, 1991):

“How can one fail to see that forecasting can work not only in the intention of its author, but also in the reality of its social becoming, either as a self-fulfilling prophecy, a performative representation capable of exerting a truly political effect of consecration of the established order (and all the more powerful for being widely recognized), or as an exorcism, capable of eliciting actions aimed at refuting it? […] The most neutral science exerts effects that are by no means neutral: thus, merely by establishing and publishing the value taken by the probability function of an event, that is, as Popper indicates, the propensity of that event to occur, an objective property inherent in the nature of things, one can contribute to strengthening the ‘pretension to exist,’ as Leibniz said, of that event, by determining agents to prepare for and submit to it, or, conversely, to mobilize to counter it, using knowledge of the probable to make its occurrence more difficult, if not impossible.” (p. 197)

With prospective LCA, this performative effect is evident, and stating a probability of occurrence of an impact in the future modifies that probability itself, making it impossible to capture. More precisely, it renders the stated probability conditional on its statement having no effect on the course of events—but then what use is it? This illustrates a tension specific to prospective LCA, which I began to touch upon in a paper (Jouannais et al., 2024) and in a few other places, namely the need to position the study relative to the actors whose actions will affect the system being studied. If one claims that the goal of prospective LCA is to guide technology developers in R&D from the earliest stages (van der Giesen et al., 2020), then one assumes these developers will indeed follow the guidance; otherwise, the exercise is pointless. In this case, there is no uncertainty (probability = 1) about the choices of developers, and more broadly, of all involved actors during the development phase. The future and final form of the studied system is, by definition of the LCA goal, the form toward which the study guides the actors. It is unknown at the start of the study, but the study’s purpose is precisely to determine it. Probability and uncertainty remain relevant for the evolution of the system that does not respond to our guidance, which we then classify as background and which follows more or less uncertain scenarios. Prospective LCA then informs the Future of the system, on which one can act, while depending on the Becoming of the background, roughly the global economy, which follows its own course. For Arendt, the future is opened by action but the becoming escapes control; for Deleuze, becoming is not a future to reach but a process of transformation. To avoid mystification, prospective LCA (and prospective modeling in general) must position itself with respect to its vision of the future: where is the Future, where is the Becoming? 
In its use to “guide” development, LCA reserves the Becoming for the background, which is determined by the socio-economic dynamics modeled by IAMs. The Future is for the foreground, where actors act based on LCA results. Two problems arise here: 1) assuming foreground actors have no Becoming, and 2) assuming the background, i.e., the global socio-economic organization, has no Future.

The first problem arises from denying the economic agency of actors under capitalism who are supposed to be guided by LCA. The most obvious version of this problem is to assume that an actor will follow the environmentally optimal option once informed, making this option the “prediction.” This artificially aligns LCA producers with system producers, even though the latter are determined in their becoming, and therefore in their choices, by their position within the social relations of the economic system that impose profit-seeking. The denial of Becoming is blatant when the studied system is not tied to an explicit economic actor but is treated as a pure technology floating outside any economic system or agency.

In my thesis, I focused on the future environmental impact of a hypothetical technology that would provide farmed fish with a bioactive molecule produced by microalgae to improve their health. Neither the molecule nor the microalgae producing it had been discovered, and I wondered whether it was possible to anticipate the environmental impact of this technology in order to decide whether it was worth searching for such molecules. In this use of LCA for planning, aiming to direct investments of time and resources toward certain developments (i.e., is it worth starting the search?), I could not limit myself to defining possible future scenarios. That would have meant producing distinct scenarios, calculating their impacts, and presenting them as all more or less possible, as images of the futures the technology could take. Such an approach would neglect the Becoming of the technological development, the chaotic sequence of events separating a lab concept from industrial deployment on the market. Taking this Becoming into account requires an external view of the development process and an attempt to capture its probabilistic signal. Supporting research into this microalgal technology initiates an uncertain process that could lead either to large heated photobioreactors producing a high-value molecule in northern Germany, with limited sunlight, or to small low-energy photobioreactors in southern Spain. These are not “available” scenarios chosen by actors; they represent epistemic uncertainty about the sequence of events separating the green-lighting of the technology from its deployment in a given modality. Without further information, one might conservatively consider two equiprobable geographical scenarios among many and produce a probabilistic impact signal capturing the Becoming of this technology, as perceived at that moment.
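The probabilistic signal described above can be sketched as a Monte Carlo mixture: each draw first samples which deployment modality the development process ends up in, then samples the impact within that modality. The modality names, their impact values (kg CO2-eq per kg of molecule), and the normal within-modality spreads are hypothetical placeholders, not results from the thesis; only the equal weighting of the two geographies follows the text.

```python
import numpy as np

# Hypothetical impact figures (kg CO2-eq per kg of molecule) for two deployment
# modalities; illustrative placeholders, not thesis results.
MODALITIES = {
    "large_heated_pbr_northern_germany": (120.0, 30.0),    # (mean, standard deviation)
    "small_low_energy_pbr_southern_spain": (40.0, 10.0),
}


def sample_becoming(n, weights, seed=0):
    """Draw n impact values from the mixture of modalities.

    `weights` encodes the epistemic uncertainty about which modality the
    development process will lead to (e.g. [0.5, 0.5] for equiprobable).
    """
    rng = np.random.default_rng(seed)
    names = list(MODALITIES)
    # Which modality does each simulated development path end up in?
    chosen = rng.choice(len(names), size=n, p=weights)
    impacts = np.empty(n)
    for i, name in enumerate(names):
        mask = chosen == i
        mean, sd = MODALITIES[name]
        # Within-modality variability, truncated at zero (assumed non-negative impacts)
        impacts[mask] = np.clip(rng.normal(mean, sd, size=mask.sum()), 0.0, None)
    return impacts, chosen


impacts, chosen = sample_becoming(100_000, weights=[0.5, 0.5])
print("5th / 50th / 95th percentiles:", np.percentile(impacts, [5, 50, 95]))
```

With these placeholder values the resulting distribution is bimodal, so summarizing it by a single central value would hide the fact that it mixes two quite different futures.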

The second problem, the denial of the Future of the global socio-economic trajectories in the background, is fully contained in the very notion of background: the theater of the world evolving on its own, according to its Becoming, onto which the small stage that is the foreground of our system is grafted. Let us recall that my point is not that the foreground/background division is useless or ineffective. The purpose here is to highlight how practice, even when scientifically sound and pragmatically intelligent, renders LCA compatible with the continuation of the current socio-political order. The background is by definition what we cannot control, and our studies merely adjust the parameters of our foreground systems while submitting to the march of the world. The associated discourse is, of course, compatible with political apathy. But what is this march of the world? It is modeled thanks to a major methodological advance: using projections from Integrated Assessment Models (IAMs) to produce databases representing future economies (Sacchi et al., 2022). De Bortoli et al. (2025) provide a good discussion of the limits and dangers of using IAMs and SSP scenarios for prospective LCA. Beyond the issues with the models themselves, we can focus on the message conveyed by their use. Most widely used IAMs are optimization models based on neoclassical economics, assuming growth even in the “sustainable” scenarios. There is a real need to develop SSP-IAM pairs that incorporate marked politico-economic breaks, such as post-growth or planned, non-capitalist economies. The possible futures represented by SSP scenarios are very unimaginative and push us to seek “solutions” within a narrow space, more or less the status quo. Paradoxically, the possible space can appear more restricted when six scenarios are presented instead of one. With a single scenario, one understands the illustrative, incomplete, and partial value of the proposed Becoming. With six, it may seem that the entirety of possible Becoming is covered.

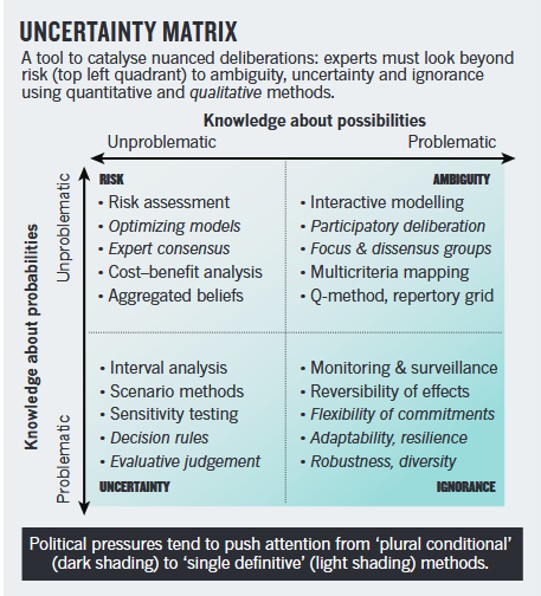

This feeling of completeness, of satisfactory coverage of the possible space, was addressed indirectly above in discussing the mystifying potential of distributions of impact values. More generally, presenting scenarios deemed probable because they are contained within the Becoming of our present organizations ultimately treats the future as a real object existing somewhere, to be circumscribed, effectively treating humanity’s future as a roll of dice: a roll whose probabilities depend only on physical phenomena, as in a mathematical experiment, which denies the agency of the human societies that act upon it. It would be entirely incoherent to claim that human societies are not mathematizable and partly predictable in their dynamics, but it is crucial never to close off the future we explore, to distinguish Becoming from Future, and to leave significant space for the latter. In practice, keeping the future open requires resisting the technocratic injunction to treat the future as a matter of probabilistic uncertainty. This resistance is one of the foundations of post-normal science (Funtowicz & Ravetz, 1993) and lies at the heart of Andy Stirling’s call in Keep it Complex (Stirling, 2010), where he reminds us that uncertainty should not be reduced to its probabilistic dimension, which, as Knight already noted (Knight, 1921), is not “uncertainty” at all, since it is fully characterized by probabilities. This probabilistic uncertainty, of the “risk” type, rarely applies to the questions posed to large models combining environment and society. These questions often involve deeper, non-probabilistic uncertainties, such as ambiguity or even ignorance, which Stirling situates in his uncertainty matrix. A truly oppositional LCA will always seek to present the uncertainties it handles according to this matrix.
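To make the matrix concrete, here is a minimal Python sketch of Stirling’s two axes, knowledge about likelihoods and knowledge about outcomes, and of the four states they define. Only the “risk” cell justifies encoding an input as a probability distribution. The example parameters and their classification are hypothetical illustrations, not taken from a published study.

```python
from enum import Enum


class Knowledge(Enum):
    UNPROBLEMATIC = "unproblematic"
    PROBLEMATIC = "problematic"


# Stirling's matrix: (knowledge about likelihoods, knowledge about outcomes) -> state
STIRLING_MATRIX = {
    (Knowledge.UNPROBLEMATIC, Knowledge.UNPROBLEMATIC): "risk",
    (Knowledge.PROBLEMATIC, Knowledge.UNPROBLEMATIC): "uncertainty",
    (Knowledge.UNPROBLEMATIC, Knowledge.PROBLEMATIC): "ambiguity",
    (Knowledge.PROBLEMATIC, Knowledge.PROBLEMATIC): "ignorance",
}


def classify(likelihoods: Knowledge, outcomes: Knowledge) -> str:
    """Return the Stirling state for one source of uncertainty."""
    return STIRLING_MATRIX[(likelihoods, outcomes)]


def probability_distribution_justified(state: str) -> bool:
    """Only the 'risk' state is fully characterized by probabilities (Knight's risk)."""
    return state == "risk"


# Hypothetical parameters of a prospective LCA model, for illustration only
examples = {
    "yearly irradiance at a known site": (Knowledge.UNPROBLEMATIC, Knowledge.UNPROBLEMATIC),
    "electricity mix in 2050": (Knowledge.PROBLEMATIC, Knowledge.UNPROBLEMATIC),
    "what counts as a 'sustainable' fish feed": (Knowledge.UNPROBLEMATIC, Knowledge.PROBLEMATIC),
    "side effects of an undiscovered molecule": (Knowledge.PROBLEMATIC, Knowledge.PROBLEMATIC),
}
for name, axes in examples.items():
    state = classify(*axes)
    print(f"{name}: {state} (probabilistic input justified: {probability_distribution_justified(state)})")
```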

I think this matrix lacks another fundamental axis, one representing the degree of control that the people in charge of the study, and therefore of characterizing the uncertainty, have over the actors whose future actions will actually bring about the situations that are uncertain today. This means clearly situating the people characterizing the uncertainty in relation to those who have “the power” to make the model’s variables take certain values. In practice, to return to my earlier example of microalgal molecule production, not knowing where the photobioreactors will be deployed in Europe stems from the fact that I, and we, as scientists and analysts, have no control over the choice of location once the molecule is discovered. The associated uncertainty, whether we choose to treat it probabilistically or not, is due to the absence of any means of constraining development, of ensuring that development will follow the results of the study itself. Such an axis is essential to complete Stirling’s matrix, but also the more common distinction between epistemic uncertainty, due to a lack of knowledge, and ontological or aleatory uncertainty, due to the modeled process itself and therefore irreducible. These two categories, the most commonly used to qualify uncertainties in modeling, completely fail to notice and state the plurality of subjectivities at play: there are phenomena, some uncertain by nature (chaotic), others requiring that we know them better. But who is this “we”? In prospective studies, the uncertain “phenomena” are largely the decisions of actors.
This axis could be called the axis of polycentricity, since it recognizes the multiplicity of actors and interests that form a polycentric whole, which requires going beyond a view of uncertainty as a property of phenomena and of our knowledge of them, and treating it also as something arising from the confrontation of many actors. Note that characterizing an uncertainty as epistemic or aleatory actually indicates what would be needed to reduce it: acquire knowledge and you will reduce epistemic uncertainty, whereas there is nothing you can do about the irreducible kind. Likewise, an uncertainty due to polycentricity disappears when the interests of the actor who determines the value of the variable in question align with those of the analysts ruling on that uncertainty. For example, the more the economy tends toward democratic planning, the more interests align, and the more the associated “uncertainty” diminishes. I will stop here, since making this operational would require a proper article. By adding this axis, and thereby limiting the mystifying potential of uncertainty characterization, we make sure not to close off the future by presenting purely mathematical objects artificially stripped of their political content.
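The proposed polycentricity axis can be sketched as one more attribute recorded for each uncertain parameter: who sets its value, and whether that actor’s interests align with the analysts’. The Python sketch below is a hypothetical illustration of the reasoning in the text, not an established classification scheme; the parameter names, the actor labels, and the rule that an aligned deciding actor turns an uncertain parameter into a Future to be chosen are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class UncertainParameter:
    name: str
    nature: str                        # "epistemic" or "aleatory" (classic distinction)
    decided_by: Optional[str] = None   # actor whose decisions set the value; None for natural processes
    interests_aligned: bool = False    # do that actor's interests align with the analysts'?

    def reduction_route(self) -> str:
        """What would make this uncertainty disappear, following the three readings in the text."""
        if self.decided_by is not None:
            # Polycentricity axis: the value results from an actor's decision
            return "future" if self.interests_aligned else "polycentric"
        if self.nature == "epistemic":
            return "epistemic"  # reducible by acquiring knowledge
        return "aleatory"       # irreducible


# Hypothetical parameters, for illustration only
params = [
    UncertainParameter("photobioreactor location", "epistemic", decided_by="technology developer"),
    UncertainParameter("climate sensitivity", "epistemic"),
    UncertainParameter("interannual irradiance", "aleatory"),
    UncertainParameter("photobioreactor location (planned economy)", "epistemic",
                       decided_by="democratic planning body", interests_aligned=True),
]
for p in params:
    print(f"{p.name}: {p.reduction_route()}")
```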

But beyond a treatment of uncertainty that does not close off the future, the distinction between Future and Becoming can be marked even more clearly by using scenario discovery. I have applied it to prospective LCA in two scientific articles (Jouannais et al., 2024, 2025), and the general idea is simple. The inverse of scenario discovery is what is usually done in prospective studies: one tries to establish, a priori, more or less possible, more or less probable scenarios, using an arsenal of participatory and more or less divinatory techniques. In practice, this can also mean defining probability distributions for a model’s parameters and propagating them by Monte Carlo to obtain an output point cloud, which can be interpreted as the probability of an impact (for an LCA). Scenario discovery reverses the problem. You define what you would like to obtain, for example that your system has a lower impact than another, and you find the scenarios (the groups of input parameter values) that allow you to reach that objective. In practice, this means simulating a very large number of cases with minimal prior knowledge beyond lower and upper bounds (without any research or additional expert, I can state that the mean atmospheric temperature in 2080 will lie between -20 and +50 °C), and using an algorithm to search for the groups of cases that meet the objective. The oppositional potential of this approach is very large. It does not force us to define mathematical probabilities for inputs when the situation should not allow it. Probabilities can be applied to inputs where Becoming dominates, and the algorithm where the Future reigns. The method then finds Futures that meet the desired objective, given the probabilistic Becoming of certain parameters.
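The procedure can be sketched in Python with a simplified version of PRIM (the Patient Rule Induction Method), a box-peeling algorithm commonly used for scenario discovery; this is not claimed to be the exact algorithm used in the articles cited above. The toy impact model, its parameter names and bounds, the triangular distribution on grid carbon intensity (the Becoming parameter), and the reference impact of 8 are all hypothetical. The two Future parameters are only given wide bounds and sampled uniformly, and the algorithm searches for the box of Future values in which the objective is most often met, marginalizing over the Becoming parameter.

```python
import numpy as np


def prim_peel(X, y, alpha=0.05, min_support=0.05):
    """Simplified PRIM: repeatedly peel an alpha-fraction of cases off one side of
    one dimension, keeping the peel that most increases the share of successes
    in the box. Stops when no peel helps or the box holds < min_support of cases."""
    lower, upper = X.min(axis=0).copy(), X.max(axis=0).copy()
    inside = np.ones(len(X), dtype=bool)
    while inside.mean() > min_support:
        best, best_density = None, y[inside].mean()
        for j in range(X.shape[1]):
            lo_cut, hi_cut = np.quantile(X[inside, j], [alpha, 1 - alpha])
            candidates = (("lower", lo_cut, inside & (X[:, j] >= lo_cut)),
                          ("upper", hi_cut, inside & (X[:, j] <= hi_cut)))
            for side, cut, keep in candidates:
                if 0 < keep.sum() < inside.sum() and y[keep].mean() > best_density:
                    best, best_density = (j, side, cut, keep), y[keep].mean()
        if best is None:
            break
        j, side, cut, inside = best
        if side == "lower":
            lower[j] = cut
        else:
            upper[j] = cut
    return lower, upper, inside


rng = np.random.default_rng(42)
n = 20_000
# Becoming: probabilistic input (hypothetical grid carbon intensity, kg CO2-eq/kWh)
grid = rng.triangular(0.05, 0.25, 0.6, size=n)
# Future: only bounds are given (hypothetical energy use in kWh/kg and recycling rate)
energy = rng.uniform(1.0, 100.0, size=n)
recycling = rng.uniform(0.0, 1.0, size=n)
impact = energy * grid + 10.0 * (1.0 - recycling)  # toy impact model
success = impact < 8.0  # objective: beat a hypothetical reference system

X_future = np.column_stack([energy, recycling])
lower, upper, inside = prim_peel(X_future, success.astype(float))
print(f"overall success rate: {success.mean():.2f}")
print(f"box: energy in [{lower[0]:.1f}, {upper[0]:.1f}], recycling in [{lower[1]:.2f}, {upper[1]:.2f}]")
print(f"success rate in box: {success[inside].mean():.2f}, share of cases in box: {inside.mean():.2f}")
```

Full PRIM implementations usually keep the whole peeling trajectory and let the analyst choose the trade-off between coverage and density; stopping at the first non-improving peel keeps the sketch short.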
You can then use the results in two ways. First, to guide our Future, that is, to work toward bringing about the discovered scenarios. Probabilistic uncertainty is then limited to the parameters on which we have decided our work will not act: typically poorly understood natural phenomena, socio-economic processes beyond our reach, and so on. But there is no “uncertainty” about the values of the Future parameters circumscribed by the algorithm; they are what we are heading toward. Second, you can proceed without any probabilistic uncertainty at all, treating all parameters identically, as open to being circumscribed by the algorithm, and then think about how to bring these situations about. And what if the discovered scenarios turn out to be impossible? Very well: you have established that the probability of reaching your objective is 0, even though you could not define input probabilities. It is much easier to reason about probabilities for a particular group of scenarios than about the probability distributions, often interdependent, of each parameter.
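The last point can be sketched as a small calculation, assuming the discovered boxes are disjoint and that the success rate measured inside each box during scenario discovery is a fair estimate of the chance of meeting the objective once the world is in that box. The analyst then only has to judge how likely each box is to be reached, rather than specify a joint distribution over every parameter: P(objective) ≥ Σᵢ P(boxᵢ) · P(objective | boxᵢ). Counting everything outside the boxes as failure makes the result a lower bound. The function name and the numbers below are hypothetical.

```python
def objective_probability_lower_bound(boxes):
    """boxes: list of (p_reach, success_rate) pairs, one per discovered box.

    p_reach: judged probability that the system ends up in that box.
    success_rate: share of simulated cases meeting the objective inside the box.
    Cases outside all boxes are counted as failures, hence a lower bound.
    """
    if sum(p for p, _ in boxes) > 1.0 + 1e-9:
        raise ValueError("boxes are assumed disjoint: their probabilities cannot sum above 1")
    for p, rate in boxes:
        if not (0.0 <= p <= 1.0 and 0.0 <= rate <= 1.0):
            raise ValueError("probabilities and success rates must lie in [0, 1]")
    return sum(p * rate for p, rate in boxes)


# Boxes judged unreachable: the bound falls to 0 (the probability itself if the boxes hold all successes)
print(objective_probability_lower_bound([(0.0, 0.95), (0.0, 0.80)]))
# Boxes judged reachable with some probability
print(objective_probability_lower_bound([(0.2, 0.9), (0.1, 0.8)]))
```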

Such approaches help demystify uncertainty and prospective studies because their results are also easy to reinterpret and reuse. Let me explain. When a team of experts carries out a prospective study and ends up publishing a probability produced by a model, even with the greatest possible transparency and intellectual honesty, it nonetheless hides its assumptions and input probabilities in the entrails of models that are incomprehensible to most people. Even colleagues do not have time to examine everything. Because of the division of labor and the conditions of its production, the published probability then appears as a fetishized mathematical object, that is, it takes on a role independent of the assumptions that support it. It becomes THE probability of the event, and no longer simply the result of work with a circumscribed question and assumptions. In other words, we forget that it is conditional: P(event | all our assumptions). It is repeated in one article, then in another, and so on, and at the end of the game of telephone we hear that there is a 5% chance of staying below 2 °C by 2100 (Raftery et al., 2017). As it stands, this information is unusable and depoliticizing, because the model’s input probabilities concern demographic and socio-economic variables, on which we can act, as much as natural ones (the climate’s response to emissions). Once again, this is explained in the article itself, but once processed by the media sphere, the probability is fetishized and effectively reduced to a mathematical object of the same nature as the 1/6 probability of rolling a 6 with a fair die.
To continue with this example, the authors of the study later published a new work (Liu & Raftery, 2021) that foregrounds the Future rather than the Becoming, showing that countries would need to increase their annual rates of greenhouse gas emission reduction by 80% to have a chance of staying below 2 °C. The probability of not exceeding it if the rate matched what countries pledged under the Paris Agreement (almost all of them are far below their pledges) would be 26%. But one can already see that this probability and the associated information are less opaque and less fetishized: one input parameter has been taken out of the probability in order to determine the value it should have to reach the objective. A political, decidable Future is thus asserted, rather than a naturalized mathematical Becoming. The next level would be scenario discovery, taking out ALL the parameters on which we can act and presenting the groups of values of these parameters that allow the objective to be reached. There is not even a need to work hard at finding the correlations between some of them: one treats them all as independent a priori and presents these groups. Above all, there is no need to make assumptions about economic growth, demography, or the carbon intensity of growth; the algorithm tells you what they would need to be to reach the objective. Everyone then has access to the entrails of the model by looking at these groups, and can think about whether they are possible and, if so, how. There is no longer a fetishized probability but a set of Futures in plain view. At the end of the game of telephone, the media (independent and socialized, of course) share the characteristics of these scenarios, which stimulate discussion rather than apathy and defeatism. And if there is no possible solution within the current economic system, we take note and change it.
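The shift from a fetishized probability to a decidable Future can be illustrated with a deliberately crude toy model. It is not the model of Raftery et al. (2017) or Liu and Raftery (2021) and does not reproduce their figures. Warming is taken as past warming plus a transient climate response to cumulative emissions (TCRE) times cumulative CO2 emissions; the TCRE distribution, the 1.2 °C of past warming, the 40 GtCO2/yr starting emissions, and the 78-year horizon are illustrative assumptions. The first part shows that the “probability of staying below 2 °C” is conditional on an assumed distribution of emission reduction rates, an actionable socio-economic input; the second part takes that input out of the probability and searches, by bisection, for the rate needed to reach a chosen probability.

```python
import numpy as np

rng = np.random.default_rng(0)
N = 200_000
# Natural (Becoming) uncertainty: warming per 1000 GtCO2, illustrative lognormal
TCRE = rng.lognormal(mean=np.log(0.45), sigma=0.25, size=N)
PAST_WARMING, E0, YEARS = 1.2, 40.0, 78  # °C, GtCO2/yr, years to 2100 (illustrative)


def cumulative_emissions(rate):
    """GtCO2 emitted over YEARS if emissions shrink by `rate` per year (geometric series)."""
    rate = np.asarray(rate, dtype=float)
    safe = np.where(rate == 0.0, 1.0, rate)  # avoid dividing by zero; the zero case is handled below
    total = np.where(rate == 0.0, float(YEARS), (1.0 - (1.0 - rate) ** YEARS) / safe)
    return E0 * total


def p_below(threshold, rate):
    """P(warming < threshold | TCRE distribution, assumed reduction rate(s))."""
    warming = PAST_WARMING + TCRE * cumulative_emissions(rate) / 1000.0
    return float(np.mean(warming < threshold))


# 1) Same model, two socio-economic assumptions -> two very different "probabilities"
bau_rates = rng.normal(0.005, 0.01, size=N)  # hypothetical weak, drifting policies
print("P(<2 °C | weak policies):", p_below(2.0, bau_rates))
print("P(<2 °C | 5 %/yr cuts):  ", p_below(2.0, 0.05))


# 2) Take the rate out of the probability: which rate gives P(<2 °C) >= target?
def required_rate(target, lo=0.0, hi=0.5, tol=1e-5):
    if p_below(2.0, hi) < target:
        return None  # no solution in this range: the constraint must be changed elsewhere
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if p_below(2.0, mid) >= target:
            hi = mid
        else:
            lo = mid
    return hi


print("rate needed for a 66 % chance:", required_rate(0.66))
```

Bisection is valid here because, with the TCRE samples held fixed, a higher reduction rate always lowers cumulative emissions and so can only raise the probability; a `None` result is the toy equivalent of finding that no solution exists within the range considered.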

Conclusion

LCA’s flaw is that it has a great many qualities. In this respect, it benefits from a kind of halo effect, the cognitive bias that leads us to attribute many additional qualities to something that has demonstrated only a few. Because LCA can indeed quantify many components of the system at the interface between the anthroposphere and the ecosphere, we are led to fetishize its results as real attributes of the products and decisions studied. Numbers hypnotize and give an impression of veridiction, and their multitude and the breadth of the modeled processes give an impression of completeness. LCA then appears as systemic analysis par excellence. If it takes “everything” into account, why do anything else? Moreover, it is relatively simple for an approach that takes everything into account, and it can even be done faster with AI… It can then supply millions of numbers in abundance, for dozens of different specifications and as many sectoral regulations, filling the supermarket of neoliberal regulation and its governance by numbers. It will build mathematical abstractions hidden in probability densities, mixing Future and Becoming, politics and physics, ethics and opposing interests. It will bear witness to small steps in the right direction, to the few percent of impact shaved off the production of a useless gadget that has thereby earned its green label, which will allow ten times more of it to be produced, wiping out the initial “environmental gain” a thousand times over. Such a use of LCA must be widely questioned, and fought in our practice.

Quantification through LCA is oppositional when it is demystified, made accessible, and popularized, and when it helps create a new understanding of the world, shedding light on the mechanisms of death and suffering in society and working to destroy them (XX cf. other article). Astronomy and physics made us abandon geocentrism and the dogmas it supported; ecology and biology made us aware of our place within the living world; sociology showed us the falsity of simplistic political myths. LCA and the modeling sciences for sustainability must reveal the continuum between society and environment, between production, decisions, and the suffering inflicted. They must also take their place in governance by numbers, but above all enter the struggle over numbers: a fundamental struggle over which indicators we choose to look at and which we choose to ignore. Political struggle is largely a struggle over the choice of the indicators that define the health of the world.

Without this, the very qualities of LCA also make it entirely usable to support a march of the world in great technosolutionist and destructive strides.

So yes, it can limit the damage, in the same way that a policy accompanying capitalism temporarily eases social tension, but today it also appears as a tool for marginally amending a dynamic inherent to capital. In her prestigious Richard T. Ely Lecture (Duflo, 2017), Esther Duflo, laureate of the Bank of Sweden Prize, suggests that economists should now see themselves as plumbers. They should partly set theory aside and focus on the practical implementation of effective solutions to localized, concrete problems, such as determining the best way to deliver development aid in a given locality. This is specialized, empirical knowledge, used by economists turned technicians of the world’s flows, called upon by decision-makers on questions of how to apply given policies.

I do not in the least doubt Esther Duflo’s sincerity and competence in her commitment to reducing poverty and inequality. But this vision of plumbing depresses me, not out of a snobbish, adolescent rejection of a practice that is less legitimate and less grandiose than “grand” theory, but because I believe that the runaway dynamics in the social, political, and environmental spheres are such that they reduce patch-up work to insignificance. LCA must not become a new tool in the plumbing of the end of History. If, as a tenant, you pay rent for an unsanitary, moldy slum that threatens your physical and mental health every second, and your magnanimous landlord sends you a plumber merely to fix the sink, you will be right to think that this plumber is ridiculous, or even a complicit bastard. I never wanted to be that landlord, but I sometimes fear becoming that plumber.

References

Cereseto, S., & Waitzkin, H. (1986). Economic development, political-economic system, and the physical quality of life. American Journal of Public Health, 76(6), 661–666. https://doi.org/10.2105/ajph.76.6.661

De Bortoli, A., Chanel, A., Chabas, C., Greffe, T., & Louineau, E. (2025). More rationality and inclusivity are imperative in reference transition scenarios based on IAMs and Shared Socioeconomic Pathways: Recommendations for prospective LCA. SSRN (Elsevier BV). https://doi.org/10.2139/ssrn.5195295

De Lagasnerie, G. (2025). Par-delà le principe de répression : Dix leçons sur l’abolitionnisme pénal. Flammarion.

Duflo, E. (2017). The Economist as Plumber. American Economic Review, 107(5), 1‑26. https://doi.org/10.1257/aer.p20171153

Engels, F. (1878). Anti-Dühring: M. Eugen Dühring bouleverse la science.

Funtowicz, S. O., & Ravetz, J. R. (1990). Epilogue. In Uncertainty and Quality in Science for Policy (Theory and Decision Library, Vol. 15). Springer, Dordrecht. https://doi.org/10.1007/978-94-009-0621-1_16

Funtowicz, S. O., & Ravetz, J. R. (1993). Science for the post-normal age. Futures, 25(7), 739‑755. https://doi.org/10.1016/0016-3287(93)90022-L

Jouannais, P., Blanco, C. F., & Pizzol, M. (2024). ENvironmental Success under Uncertainty and Risk (ENSURe): A procedure for probability evaluation in ex-ante LCA. Technological Forecasting and Social Change, 201, 123265. https://doi.org/10.1016/j.techfore.2024.123265

Jouannais, P., Marchand-Lasserre, M., Douziech, M., & Pérez-López, P. (2025). When is Agrivoltaism Environmentally Beneficial? A Consequential LCA and Scenario Discovery Approach. Environmental Science & Technology, acs.est.5c04479. https://doi.org/10.1021/acs.est.5c04479

Knight, F. H. (1921). Risk, Uncertainty, and Profit. Houghton Mifflin. https://doi.org/10.34156/9783791046006-108

Konradsen, F., Hansen, K. S. H., Ghose, A., & Pizzol, M. (2024). Same product, different score: How methodological differences affect EPD results. The International Journal of Life Cycle Assessment, 29(2), 291–307. https://doi.org/10.1007/s11367-023-02246-x

Lena, H. F., & London, B. (1993). The Political and Economic Determinants of Health Outcomes: A Cross-National Analysis. International Journal of Health Services, 23(3), 585–602. https://doi.org/10.2190/EQUY-ACG8-X59F-AE99

Lénine, V. I. (1917). L’État et la révolution.

Liu, P. R., & Raftery, A. E. (2021). Country-based rate of emissions reductions should increase by 80% beyond nationally determined contributions to meet the 2 °C target. Communications Earth and Environment, 2(1), 1‑10. https://doi.org/10.1038/s43247-021-00097-8

Marx, K. (1845). Theses on Feuerbach.

Mespoulet, M. (2012). Instrumentation statistique et action de l’État : le cas soviétique. Éducation et sociétés, 30(2), 89–106. https://doi.org/10.3917/es.030.0089

Navarro, V. (1992). Has Socialism Failed? An Analysis of Health Indicators under Socialism. International Journal of Health Services, 22(4), 583‑601. https://doi.org/10.2190/B2TP-3R5M-Q7UP-DUA2

Priewe, J. (2020). Why 60 and 3 percent? European debt and deficit rules: Critique and alternatives (IMK Study No. 66). Institut für Makroökonomie und Konjunkturforschung (IMK), Düsseldorf. https://nbn-resolving.de/urn:nbn:de:101:1-2020052612570065992221

Raftery, A. E., Zimmer, A., Frierson, D. M. W., Startz, R., & Liu, P. (2017). Less than 2 °C warming by 2100 unlikely. Nature Climate Change, 7(9), 637‑641. https://doi.org/10.1038/nclimate3352

Sacchi, R., Terlouw, T., Siala, K., Dirnaichner, A., Bauer, C., Cox, B., Mutel, C., Daioglou, V., & Luderer, G. (2022). PRospective EnvironMental Impact asSEment (premise): A streamlined approach to producing databases for prospective life cycle assessment using integrated assessment models. Renewable and Sustainable Energy Reviews, 160, 112311. https://doi.org/10.1016/j.rser.2022.112311

Saltelli, A., Bammer, G., Bruno, I., Charters, E., Di Fiore, M., Didier, E., Nelson Espeland, W., Kay, J., Lo Piano, S., Mayo, D., Pielke, R., Portaluri, T., Porter, T. M., Puy, A., Rafols, I., Ravetz, J. R., Reinert, E., Sarewitz, D., Stark, P. B., … Vineis, P. (2020). Five ways to ensure that models serve society: A manifesto. Nature, 582(7813), 482–484. https://doi.org/10.1038/d41586-020-01812-9

Sareen, S., Saltelli, A., & Rommetveit, K. (2020). Ethics of quantification: Illumination, obfuscation and performative legitimation. Palgrave Communications, 6(1), 20. https://doi.org/10.1057/s41599-020-0396-5

Serna, P. (2019). L’extrême centre ou le poison français : 1789–2019. Champ Vallon.

Stirling, A. (2010). Keep it complex. Nature, 468, 1029–1031. https://doi.org/10.1038/4681029a

Supiot, A. (2015). La gouvernance par les nombres. Fayard.

Thompson, E. L., & Smith, L. A. (2019). Escape from model-land. Economics, 13, 1‑15. https://doi.org/10.5018/economics-ejournal.ja.2019-40

van der Giesen, C., Cucurachi, S., Guinée, J., Kramer, G. J., & Tukker, A. (2020). A critical view on the current application of LCA for new technologies and recommendations for improved practice. Journal of Cleaner Production, 259. https://doi.org/10.1016/j.jclepro.2020.120904

Weidema, B. P., Pizzol, M., Schmidt, J., & Thoma, G. (2018). Attributional or consequential Life Cycle Assessment : A matter of social responsibility. Journal of Cleaner Production, 174, 305‑314. https://doi.org/10.1016/j.jclepro.2017.10.340